
Riddled as it is with myriad financial nuances, coverage conditions, and four different “parts,” the Medicare bureaucracy may seem daunting, especially if this is your first time dealing with it. But understanding the system can help participants get the best care possible and save a significant amount of money. And when it comes to Medicare Part B, which received a significant overhaul in 2017 that benefits scores of older Americans, it’s critical to understand the scope of what is and isn’t covered.

In short, Medicare Part B is the Medicare program that covers doctor bills and other outpatient costs. It’s one of Medicare’s four programs, each identified by a letter: Part A (hospital insurance), Part B (medical insurance), Part C (managed care plans), and Part D (prescription drug coverage).

Almost everyone who is 65 and older is eligible for Medicare Part B. Although it will pay part of many participants’ doctor bills and other outpatient costs, it leaves some services uncovered and pays only a portion of the cost of the services it does cover. Participants may need to fill the gaps in coverage (the costs Part B doesn’t pay) with Medigap supplemental insurance, a Part C managed care plan, Medicaid benefits, or other sources. Below, we’ve highlighted what you should know about Medicare Part B coverage and enrollment for you or a loved one.

Medicare Part B Explained

Any U.S. citizen, or any legal resident who has lived in the country for five consecutive years, is eligible. What’s more, participants aren’t required to have Medicare Part A in order to enroll in Part B.

Every individual enrolled in Medicare Part B pays a monthly premium for it (except people enrolled in Medicaid, which pays the Part B premium for them). The premium goes up each year on January 1. In 2019, most people will pay $135.50 per person, per month.
Single people (or a married person filing a separate tax return) with a modified adjusted gross income over $85,000 per year pay higher premiums, as do couples whose combined income exceeds $170,000. Medicare bases these calculations on tax returns from two years before. If participants’ income has dropped significantly in the past couple of years (for example, because they stopped working), they can contact the Social Security Administration with this information and request that their premiums be adjusted accordingly. For those who don’t enroll in Part B when first eligible for it at age 65 but do enroll later, the premium will be 10 percent higher for every full year of delay in enrollment.

Does Part B cover doctor bills?

The short answer is yes. Doctor bills are probably the biggest share of the outpatient expenses covered by Part B. The category includes any service by a doctor wherever it’s provided: hospital, doctor’s office, clinic, etc. It also covers other work performed by the doctor’s staff, as well as drugs administered in the office. That said, there are two basic coverage rules: the care must be medically necessary, and it must be performed by a doctor who accepts Medicare payment. This means that before participants see any new doctor, they should make sure that the doctor accepts Medicare.

With many limitations, Part B also covers some care by a chiropractor. This is only for short-term manipulation of out-of-place vertebrae (neck and back) by a Medicare-certified chiropractor. Before seeing a chiropractor, have the chiropractor’s office check directly with Medicare to ensure coverage.
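The premium rules above amount to simple arithmetic. The sketch below is illustrative only, assuming the 2019 figures quoted in this article: a $135.50 standard monthly premium, a surcharge of 10 percent of that premium for each full year of delayed enrollment, and the $85,000 (single or married filing separately) and $170,000 (married couple) income thresholds, checked against the tax return from two years earlier. The function names are hypothetical. The size of the higher-income surcharge is not modeled because the article does not give it, and Medicare’s own rounding of the penalty is not reproduced.

```python
from decimal import Decimal, ROUND_HALF_UP

# 2019 figures quoted in the article; both change from year to year.
STANDARD_PREMIUM_2019 = Decimal("135.50")  # dollars per person, per month
INCOME_THRESHOLDS = {
    "single": Decimal("85000"),
    "married_separate": Decimal("85000"),  # same threshold as single filers, per the article
    "married_joint": Decimal("170000"),    # the couple's combined income
}
LATE_PENALTY_PER_YEAR = Decimal("0.10")    # 10% of the standard premium per year of delay


def premium_with_late_penalty(standard: Decimal, years_delayed: int) -> Decimal:
    """Hypothetical helper: monthly premium after the late-enrollment surcharge."""
    if years_delayed < 0:
        raise ValueError("years_delayed cannot be negative")
    surcharge = standard * LATE_PENALTY_PER_YEAR * years_delayed
    # Round to whole cents; Medicare's actual rounding rule is not modeled here.
    return (standard + surcharge).quantize(Decimal("0.01"), rounding=ROUND_HALF_UP)


def pays_higher_premium(income_two_years_prior: Decimal, filing_status: str) -> bool:
    """Hypothetical helper: True if income on the return from two years earlier exceeds the threshold."""
    return income_two_years_prior > INCOME_THRESHOLDS[filing_status]


if __name__ == "__main__":
    # Enrolling two years late: 135.50 + 2 * 13.55 = 162.60 per month.
    print(premium_with_late_penalty(STANDARD_PREMIUM_2019, 2))
    print(pays_higher_premium(Decimal("90000"), "single"))
    print(pays_higher_premium(Decimal("160000"), "married_joint"))
```

Under these assumptions, someone who enrolls two years late would pay $162.60 a month in 2019 instead of $135.50.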


Medicare Part C = Medicare Advantage plans

Medicare Part D = Prescription drug coverage

Medigap plans = Supplemental insurance

The Medicare tax is a payroll tax on earned income that helps fund the health coverage you receive once you become eligible for Medicare. It is withheld automatically from each paycheck and applies to your earnings, including wages, tips, compensation subject to the Railroad Retirement Tax Act (RRTA), and self-employment earnings above a certain level. For wages there is no minimum income limit: all individuals who work in the United States must pay the Medicare tax on their earnings.

Who pays the Medicare tax?

Generally, all employees who work in the U.S. must pay the Medicare tax, regardless of the citizenship or residency status of the employee or employer. In certain limited situations, you may also have to pay the Medicare tax on income earned outside the United States (your employer should be able to confirm whether this applies to you). If Medicare taxes are withheld from your paycheck in error, contact your employer to ask for a refund.

The Medicare tax rate is set by federal law and is subject to change. The Federal Insurance Contributions Act (FICA) tax rate on an employee’s earned income is 7.65% in 2018 and 2019, which consists of the Social Security tax (6.2%) and the Medicare tax (1.45%). The Medicare tax is one of the federal taxes withheld from your paycheck if you’re an employee, or that you are responsible for paying yourself if you are self-employed. Self-employed people pay a higher rate, since they are responsible for both the employee portion and the share normally paid by an employer. For the current self-employment tax rate, visit IRS.gov or contact Social Security at 1-800-772-1213 (TTY 1-800-325-0778), Monday through Friday, from 7 a.m. to 7 p.m. in all U.S. time zones.

What is the Additional Medicare Tax?

The Affordable Care Act expanded the Medicare payroll tax to include the Additional Medicare Tax.
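The rates in this section can be combined into a rough calculator. Below is a minimal sketch, assuming one year of earnings: 1.45% on all earnings (2.9% for the self-employed, who pay both shares) plus the 0.9% Additional Medicare Tax on the portion above a filing-status threshold. The threshold amounts used here ($200,000 for single or head-of-household filers, $250,000 for married couples filing jointly, $125,000 for married filing separately) are the IRS figures and are not given in this article; the function name `medicare_tax` is hypothetical. The sketch also skips the IRS rule that applies self-employment tax to 92.35% of net earnings, so its results for the self-employed slightly overstate the tax.

```python
# Employee Medicare rate quoted in the article (1.45% of wages).
MEDICARE_RATE = 0.0145
# Additional Medicare Tax rate added by the Affordable Care Act.
ADDITIONAL_RATE = 0.009
# Assumption: IRS Additional Medicare Tax thresholds (not stated in the article).
ADDITIONAL_THRESHOLDS = {
    "single": 200_000,
    "head_of_household": 200_000,
    "married_joint": 250_000,    # compared against the couple's combined earnings
    "married_separate": 125_000,
}


def medicare_tax(earnings: float, filing_status: str = "single", self_employed: bool = False) -> float:
    """Hypothetical helper: approximate annual Medicare tax on one year's earnings."""
    if earnings < 0:
        raise ValueError("earnings cannot be negative")
    # Self-employed people pay both the employee and the employer share.
    base_rate = MEDICARE_RATE * (2 if self_employed else 1)
    base_tax = earnings * base_rate
    # The extra 0.9% applies only to the portion above the threshold.
    excess = max(0.0, earnings - ADDITIONAL_THRESHOLDS[filing_status])
    return round(base_tax + excess * ADDITIONAL_RATE, 2)


if __name__ == "__main__":
    print(medicare_tax(100_000))                      # 1.45% of 100,000
    print(medicare_tax(250_000))                      # 1.45% of 250,000 plus 0.9% of 50,000
    print(medicare_tax(300_000, "married_joint"))     # threshold is 250,000 for joint filers
    print(medicare_tax(100_000, self_employed=True))  # 2.9% of 100,000
```

Note that the extra 0.9% is charged only on the slice above the threshold, not on every dollar once the threshold is crossed.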
This tax requires higher earners to pay an additional 0.9% on earned income above a threshold set by their filing status. All types of wages currently subject to the Medicare tax may also be subject to the Additional Medicare Tax. An individual owes the Additional Medicare Tax on the portion of their combined wages, compensation, and self-employment income that exceeds the threshold for their filing status.

1. Medicaid is the nation’s public health insurance program for people with low income

The Medicaid program covers 1 in 5 Americans, including many with complex and costly needs for care. The program is the principal source of long-term care coverage for Americans. The vast majority of Medicaid enrollees lack access to other affordable health insurance. Medicaid covers a broad array of health services and limits enrollee out-of-pocket costs. Medicaid finances nearly a fifth of all personal health care spending in the U.S., providing significant financing for hospitals, community health centers, physicians, nursing homes, and jobs in the health care sector. Title XIX of the Social Security Act and a large body of federal regulations govern the program, defining federal Medicaid requirements and state options and authorities. The Centers for Medicare and Medicaid Services (CMS) within the Department of Health and Human Services (HHS) is responsible for implementing Medicaid.

2. Medicaid is structured as a federal-state partnership

Subject to federal standards, states administer Medicaid programs and have flexibility to determine covered populations, covered services, health care delivery models, and methods for paying physicians and hospitals. States can also obtain Section 1115 waivers to test and implement approaches that differ from what is required by federal statute but that the Secretary of HHS determines advance program objectives.
Because of this flexibility, there is significant variation across state Medicaid programs. The Medicaid entitlement is based on two guarantees: first, all Americans who meet Medicaid eligibility requirements are guaranteed coverage, and second, states are guaranteed federal matching dollars without a cap for qualified services provided to eligible enrollees. The match rate for most Medicaid enrollees is determined by a formula in the law that provides a match of at least 50% and a higher federal match rate for poorer states.

3. Medicaid coverage has evolved over time

Under the original 1965 Medicaid law, Medicaid eligibility was tied to cash assistance, either Aid to Families with Dependent Children (AFDC) or, starting in 1972, federal Supplemental Security Income (SSI), for parents, children, and poor people who were aged, blind, or had disabilities. States could opt to provide coverage at income levels above cash assistance. Over time, Congress expanded federal minimum requirements and provided new coverage options for states, especially for children, pregnant women, and people with disabilities. Congress also required Medicaid to help pay for premiums and cost-sharing for low-income Medicare beneficiaries and allowed states to offer working individuals with disabilities an option to “buy in” to Medicaid. Other coverage milestones included severing the link between Medicaid eligibility and welfare in 1996 and enacting the Children’s Health Insurance Program (CHIP) in 1997 to cover low-income children above the cut-off for Medicaid with an enhanced federal match rate. Following these policy changes, states for the first time conducted outreach campaigns and simplified enrollment procedures to enroll eligible children in both Medicaid and CHIP. Expansions in Medicaid coverage of children marked the beginning of later reforms that recast Medicaid as an income-based health coverage program.

4. Medicaid covers 1 in 5 Americans and serves diverse populations

Medicaid provides health and long-term care for millions of America’s poorest and most vulnerable people, acting as a high-risk pool for the private insurance market. In FY 2017, Medicaid covered over 75 million low-income Americans. As of February 2019, 37 states have adopted the Medicaid expansion. Data as of FY 2017 (when fewer states had adopted the expansion) show that 12.6 million people were newly eligible in the expansion group. Children account for more than four in ten (43%) of all Medicaid enrollees, and the elderly and people with disabilities account for about one in four enrollees.

Medicaid plays an especially critical role for certain populations, covering nearly half of all births in the typical state; 83% of poor children; 48% of children with special health care needs and 45% of nonelderly adults with disabilities (including physical disabilities, developmental disabilities such as autism, traumatic brain injury, serious mental illness, and Alzheimer’s disease); and more than six in ten nursing home residents. States can opt to provide Medicaid for children with significant disabilities in higher-income families to fill gaps in private health insurance and limit out-of-pocket financial burden. Medicaid also assists nearly 1 in 5 Medicare beneficiaries with their Medicare premiums and cost-sharing and provides many of them with benefits not covered by Medicare, especially long-term care.

There are several different types of Medicare plans, which vary both in the services they cover and in who administers them. People who are eligible for Medicare commonly choose the Original Medicare Plan or a Medicare Advantage Plan. The Original Medicare Plan is a fee-for-service plan managed by the federal government. Most people on the Original Medicare Plan have a combination of what are referred to as Part A and Part B benefits.
Additionally, recipients of Original Medicare have the option of adding a Medicare Prescription Drug Plan (a Medicare Part D plan) and purchasing a Medigap, or supplemental, policy. Medicare Advantage plans are typically HMOs (Health Maintenance Organizations) or PPOs (Preferred Provider Organizations) that can provide Part A, B and D coverage. Let’s discuss the most common plans:

1. Individual Health Insurance Plan

An Individual Health Insurance Plan covers the health expenses of the insured individual. These policies pay for surgical and hospitalisation expenses until the cover limit is reached. The premium for an individual plan is based on the medical history and age of the person buying the plan.

2. Family Floater Health Insurance Plan

If a person wants to buy health insurance for their entire family (spouse, children and parents) in a single plan, they should choose a Family Floater Policy. Any family member covered under the policy can claim for hospitalisation and surgical expenses. As with an Individual Health Insurance Plan, the policyholder pays a premium for a family floater policy; it is determined by the age of the eldest member covered by the policy.

3. Group Health Cover

Group Health Insurance plans are bought by an employer for its employees. The premium for group insurance is lower than for an individual health insurance policy. Group health plans are usually standardised and offer the same benefits to all employees.

4. Senior Citizen Health Insurance

In old age, health issues arise that involve expensive treatments. To meet such high medical costs, insurance companies have designed special health insurance plans for senior citizens. These plans provide cover to anyone aged 65 and above. Generally, the premium for a senior citizen health insurance plan is higher than for other policies.

5. Critical Illness Health Cover

A Critical Illness Policy covers the expenses of treating life-threatening conditions such as a tumour or permanent paralysis. These policies usually pay a lump sum to the insured person on diagnosis of a serious disease covered in the policy document.
Unlike other policies such as Individual Health Insurance and Family Floater policies, a critical illness policy does not require hospitalisation; a diagnosis of the disease is enough to claim the benefits.

6. Super Top-Up Policy

Super Top-Up Plans offer additional coverage over a regular policy, increasing the total sum insured. The Super Top-Up Policy can be used only after the sum insured of one’s regular policy is exhausted. For example, suppose a person has a regular health plan of Rs 3 lakh and a Super Top-Up plan of Rs 5 lakh. If there is a claim for Rs 5 lakh, the existing medical policy will pay Rs 3 lakh and the remaining Rs 2 lakh will be covered by the Super Top-Up policy.

Conclusion: Health insurance provides financial assistance for unanticipated medical emergencies. Every individual has different medical needs. Some may need individual health insurance to cover their own medical costs, while others may prefer a family floater policy to cover the medical expenses of all family members in a single policy. A Super Top-Up Policy acts as a supplement to the insured’s regular or existing health policy. Thus, a person should select a health insurance plan after considering their medical needs and requirements.

Having the right health insurance benefit for your small business is extremely important. To help you find the benefit that fits your needs, we’ll go over seven types of health insurance plans. Five of these are traditional group health insurance policies, but we’ll also introduce you to alternatives if group health is outside your budget. Knowing these policy types will prepare you to evaluate options each year as part of your internal small business audit.

1. Preferred Provider Organization (PPO)

A PPO plan is a Preferred Provider Organization group health insurance policy. With a PPO plan, employees are encouraged to use a network of preferred doctors and hospitals.
These providers are contracted to serve plan members at a negotiated or discounted rate. Employees generally aren’t required to designate a primary care physician and can see any doctor or specialist within the plan’s network. Employees have an annual deductible they must meet before the insurance company begins covering their medical bills. They may also have a copayment for certain services, or co-insurance, under which they’re responsible for a percentage of the total charges. With a PPO, services rendered outside the network may result in higher out-of-pocket costs. A PPO may be a good option for your small business if your employees:
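The deductible-then-co-insurance arithmetic described for PPOs can be sketched in a few lines. This is a hypothetical illustration, not any insurer’s actual method: it assumes separate in-network and out-of-network deductibles, ignores copayments and any annual out-of-pocket maximum, and uses made-up dollar amounts and rates. The names `CostSharing` and `member_share` are illustrative.

```python
from dataclasses import dataclass


@dataclass
class CostSharing:
    """Hypothetical plan terms; real PPO terms vary by plan."""
    deductible: float   # annual amount the member pays before the plan starts paying
    coinsurance: float  # member's share of charges after the deductible (0.20 = 20%)


def member_share(charge: float, deductible_left: float, terms: CostSharing) -> tuple[float, float]:
    """Return (amount the member pays, deductible still remaining) for one bill."""
    # The member pays the bill in full until the deductible is used up...
    toward_deductible = min(charge, deductible_left)
    remainder = charge - toward_deductible
    # ...then pays only the co-insurance percentage of what is left.
    paid = toward_deductible + remainder * terms.coinsurance
    return round(paid, 2), deductible_left - toward_deductible


if __name__ == "__main__":
    # Illustrative numbers only: out-of-network care carries a bigger deductible and share.
    in_network = CostSharing(deductible=1_000, coinsurance=0.20)
    out_of_network = CostSharing(deductible=2_000, coinsurance=0.40)

    print(member_share(3_000, in_network.deductible, in_network))          # (1400.0, 0)
    print(member_share(3_000, out_of_network.deductible, out_of_network))  # (2400.0, 0)
```

Under these assumed terms, the same $3,000 bill costs the member $1,400 in network but $2,400 out of network, which is the kind of gap the text warns about.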

2. Health Maintenance Organization (HMO) Health Insurance Plans

An HMO is a Health Maintenance Organization group health insurance policy. With an HMO plan, employees generally have lower out-of-pocket expenses but less flexibility in their choice of physicians or hospitals than with other plans. An HMO may require employees to choose a primary care physician (PCP), and to see a specialist, employees will need a referral from their PCP. HMOs generally cover a broader range of preventive services than other policies. Employees may or may not have to pay a deductible before their coverage starts, and will usually have a copayment. Most of the time, there are no claim forms to file with an HMO. The main thing to keep in mind is that with most HMO plans, employees have no coverage outside their network unless they have proper authorization from their PCP or face certain emergency situations. An HMO may be a good option for your small business if you:

3. Point of Service (POS) Health Insurance Plans

A POS is a Point of Service group health insurance policy. POS plans combine features of HMO and PPO plans. As with an HMO, POS plans may require employees to choose a Primary Care Physician (PCP) from the plan’s network providers. Generally, services rendered by the PCP aren’t subject to the policy’s deductible. If employees use covered services that are rendered or referred by their PCP, they may receive a higher level of coverage. If they use a non-network provider, they may be subject to a deductible and a lower level of coverage, and may also have to pay up front and submit a claim for reimbursement. A POS may be a good option for your small business if your employees:

4. Exclusive Provider Organization (EPO) Health Insurance Plans

An EPO is an Exclusive Provider Organization group health insurance policy. EPO plans are similar to HMO plans because members are required to use the plan’s network of physicians except in an emergency. Employee members will have a Primary Care Physician (PCP) who provides referrals to in-network specialists. EPO members are responsible for small co-payments and may have a deductible. An EPO may be a good option for your small business if you:

5. Indemnity Health Insurance Plans

Indemnity health plans are known as fee-for-service plans because they pay the member pre-determined amounts or percentages of the cost of covered services. The member may be responsible for deductibles and co-insurance amounts. In most cases, the member pays out of pocket first and then files a claim to be reimbursed for the covered amount. An indemnity plan may be a good option for your business if you:

As my otherwise awesome parents are pretty conservative about, well, everything, I don’t want them to know what type of birth control I’m using or when I get tested for STIs and HIV (twice a year, if you’re interested). When I was in college I paid for these things out of pocket or put them off until free services were offered at my university, because I didn’t want my parents getting a play-by-play when they saw our health insurance information. Clearly it wasn’t an ideal situation. But a few months ago I learned how to tear myself away from the tyranny of EOBs (Explanation of Benefits) and get some privacy. Here’s how I did it.

EOBs are the documents your insurance company sends out that show basic information about anything your plan helped cover during that statement period, from prescription costs to hospital payments. For those without a health policy background, the Health Insurance Portability and Accountability Act (HIPAA) is designed to protect an individual’s health privacy, but HIPAA rules allow EOBs to go to the “primary enrollee” of an insurance plan (a.k.a. parents, in my case) for billing purposes, as long as only necessary information is included. Insurance companies interpret “necessary” differently, so EOBs vary in detail from one company to the next.

Having any charges or visits related to my sexual health visible to my parents wasn’t something I was comfortable with, so I took action. I called my insurance company one afternoon and asked them to send my EOBs directly to me. The representative handling my call was incredibly helpful (tip of the hat to Humana!) and immediately changed my contact information and stipulated on the account that only I would be able to see my information, unless I chose to release it to my parents.
Now I can take full advantage of the benefits of health insurance (like no copays for birth control under the Affordable Care Act) and make healthcare decisions that are best for me without worrying about my privacy.

Not every insurance company will allow information to be withheld from the person purchasing the plan. In fact, laws vary from state to state, so some states may be more protective of your information than others. That could be why my friends from a handful of states across the country had very different experiences from mine when they called their insurance companies. One of them had a particularly awful phone call with a representative who had no idea what the company’s rules were about EOBs or whether she could even change the address for an individual on the plan. Another friend’s company said that because she still lived with her parents, they couldn’t send her EOBs to any other address. Still, considering that Mirena can cost over $500 upfront and the birth control pill can cost over $300 a year, it’s worth calling your insurance company to try to change your “privacy settings.”

SINGAPORE: Singapore’s health care system is sometimes held up as an example of excellence, and as a possible model for what could come next in the United States. When we published the results of an Upshot tournament on which country had the world’s best health system, Singapore was eliminated in the first round, largely because most of the experts had a hard time believing much of what the nation seems to achieve.

It does achieve a lot. Americans have spent the last decade arguing loudly about whether and how to provide insurance to the relatively small percentage of people who don’t have it. Singapore is way past that. It’s perfecting how to deliver care to people, focusing on quality, efficiency and cost.
Americans may be able to learn a thing or two from Singaporeans, as I discovered in a recent visit to study the health system, although there are also reasons that comparisons between the nations aren’t apt.

A population that is healthier

Singapore is an island city-state of around 5.8 million people. At 279 square miles, it’s smaller than Indianapolis, the city where I live, and has no rural or remote areas. Everyone lives close to doctors and hospitals.

Another big difference between Singapore and the United States lies in social determinants of health. Singapore has much less poverty than one might see in other developed countries. The tax system is progressive. The bottom 20 percent of Singaporeans by income pay less than 10 percent of all taxes and receive more than a quarter of all benefits. The richest 20 percent pay more than half of all taxes and receive only 12 percent of the benefits. Everyone has access to comparable school systems, and the government heavily subsidizes housing. Rates of smoking, alcoholism and drug abuse are relatively low. So are rates of obesity.

Things to like, for the left and right

The government’s health care philosophy is laid out clearly in five objectives. In the United States, conservatives may be pleased that one objective stresses personal responsibility and cautions against reliance on either welfare or medical insurance. Another notes the importance of the private market and competition to improve services and increase efficiency. Liberal-leaning Americans might be impressed that one objective is universal basic care and that another is cost containment by the government, especially when the market fails to keep costs low enough.

Singapore appreciates the relative strengths and limits of the public and private sectors in health. Often in the United States, we think that one or the other can do it all. That’s not necessarily the case. Dr. Jeremy Lim, a partner in Oliver Wyman’s Asia health care consulting practice based in Singapore and the author of one of the seminal books on its health care system, said, “Singaporeans recognize that resources are finite and that not every medicine or device can be funded out of the public purse.” He added that a high trust in the government “enables acceptance that the government has worked the sums and determined that some medicines and devices are not cost-effective and hence not available to citizens at subsidized prices.”

In the end, the government holds the cards. It decides where and when the private sector can operate. In the United States, the opposite often seems true. The private sector is the default system, and the public sector comes into play only when the private sector doesn’t want to. In Singapore, the government strictly regulates what technology is available in the country and where. It makes decisions as to what drugs and devices are covered in public facilities. It sets the prices and determines what subsidies are available.

“There is careful scrutiny of the ‘latest and greatest’ technologies and a healthy skepticism of manufacturer claims,” Dr. Lim said. “It may be at the forefront of medical science in many areas, but the diffusion of the advancements to the entire population may take a while.”

Government control also applies to public health initiatives. Officials began to worry about diabetes, so they acted. School lunches have been improved. Regulations have been passed to make meals on government properties and at government events healthier. In the United States, the American Academy of Pediatrics and the American Heart Association recently called on policymakers to impose taxes and advertising limits on the soda industry. But that’s merely guidance; there’s no power behind it. In Singapore, campaigns have encouraged drinking water, and healthier food choice labels have been mandated.
The country, with control over its food importation, even got beverage manufacturers to agree to reduce sugar content in drinks to a maximum of 12 percent by 2020. Should beverage companies fail to comply, officials might not just tax the drinks; they could ban them.

What’s really special is the delivery

Singapore gets a lot of attention because of the way it pays for its health care system. What’s less noticed is its delivery system.

Primary care, which is generally low cost, is provided mostly by the private sector. About 80 percent of Singaporeans get such care from about 1,700 general practitioners. The rest use a system of 18 polyclinics run by the government. As care becomes more complicated, and therefore more expensive, more people turn to the polyclinics. About 45 percent of those who have chronic conditions use polyclinics, for example.

The polyclinics are a marvel of efficiency. They have been designed to process as many patients as quickly as possible. The government encourages citizens to use its online app to schedule appointments, see wait times and pay their bills. Even so, a major complaint is the wait time. Doctors carry a heavy workload, seeing upward of 60 patients a day. There’s also a lack of continuity. Patients at polyclinics don’t get to choose their physicians; they see whoever is working that day.

Care is cheap, however. A visit for a citizen costs 8 Singapore dollars in clinic fees, a little under $6 U.S. Seeing a private physician can cost three times as much (still cheap in American terms).

For hospitalizations, the public vs. private share is flipped. Only about 20 percent of people choose a private hospital for care. The other 80 percent choose public hospitals, which are, again, heavily subsidized. People can choose levels of service there (from A to C, as described in an earlier Upshot article), and most choose a “B” level. About half of all care provided in private hospitals is to noncitizens of Singapore.
Even citizens who choose private hospitals move to the public system when they can as their care gets more expensive. So Singapore isn’t really a more “private” system. It’s just privately funded. In effect, it’s the opposite of what we have in the United States. We have a largely publicly financed private delivery system. Singapore has a largely privately financed public delivery system.

There’s also more granular control of the delivery system. In 1997, there were about 60,000 ambulance calls, but about half of those were not for actual emergencies. What did Singapore do? It declared that while ambulance services for emergencies would remain free, those who called for nonemergencies would be charged the equivalent of $185. Of course, this might make the public afraid to call for real emergencies. But the policy was introduced with intensive public education and messaging. And Singaporeans have identifier numbers that are consistent across health centers and types of care.

“The electronic health records are all connected, and data are shared between them,” said Dr. Marcus Ong, the emergency medical services director. “When patients are attended to for an emergency, records can be quickly accessed, and many nonemergencies can be then cleared with accurate information.

“By 2010, there were more than 120,000 calls for emergency services, and very few were for nonemergencies.”

The good times may not last

Singapore made big early health leaps, relatively inexpensively, in reducing infant mortality and increasing life expectancy. It did so in part through “better vaccinations, better sanitation, good public schools, public campaigns against tobacco” and good prenatal care, said Dr. Wong Tien Hua, the immediate past president of the Singapore Medical Association. But in recent years, as in the United States, costs have started to rise much more quickly with greater use of modern technological medicine. The population is also aging rapidly.
It’s unlikely that the country’s spending on health care will approach that of the United States (18 percent of G.D.P.), but the days of spending significantly less than the global average of 10 percent are probably numbered.

Medical officials are also worried that the problems of the rest of the world are catching up to them. They’re worried that diabetes is on the rise. They’re worried that fee-for-service payments are unsustainable. They’re worried hospitals are learning how to game the system to make more money. But they’re also aware of the possible endgame. One told me, “Nobody wants to go down the United States route.”

Perhaps most important, the health care system in Singapore seems more geared toward raising up all its citizens than toward achieving excellence in a few high-profile areas. Without major commitments to spending, we in the United States aren’t likely to see major changes to social determinants of health or housing. We also aren’t going to shrink the size of our system or get everyone to move to big cities.

It turns out that Singapore’s system really is quite remarkable. It also turns out that it’s most likely not reproducible. That may be our loss.

Putting a price on longevity or well-being is tricky, but not impossible.

Many people know by now that the United States spends much more on health care than any other country, and that health outcomes are not a lot better (and in many instances worse). That raises the question: Is our health care spending actually worth it?

It’s tricky to figure out the extent of the roles that the environment, genetics and social support play in improving health. Nevertheless, the best evidence tells us that health care is still very valuable, even at U.S. prices. Consider this analogy: If you had to choose between no transportation and a new $50,000 luxury car, the car is worth it. This is the U.S. health system: expensive, but better than nothing.

How can such expensive health care be worth it? One piece of the puzzle is connecting health care to longevity. Research published in 1994 by scholars from King’s College in London and Harvard used clinical studies to estimate the effect of medical interventions for common diseases on mortality reduction. With this approach, they estimated that of the 7.5 years of life expectancy gained between 1950 and the early 1990s, three years (or 40 percent) could be attributed to health care.

Nearly all of this longevity gain is because of declines in infant mortality and in death from cardiovascular disease. Health care interventions deserve credit for much of both. For example, a study by Earl Ford, an epidemiologist at the Centers for Disease Control and Prevention, and colleagues found that half of the decline in deaths from coronary heart disease between 1980 and 2000 could be attributed to the health system. But reduced smoking rates also had a substantial effect.

Beyond longevity, the King’s College/Harvard study looked at the effect of medical intervention on broader well-being.
It found that health care could improve the quality of life for patients with a wide variety of conditions, including unipolar depression, heart disease, osteoarthritis, pain accompanying terminal cancer, peptic ulcers, gallstones, migraines, bone fractures and vision and hearing impairments. Naturally, this work and other studies like it have limitations. For one, even as patients get treatment, they also may make lifestyle changes, whether from a doctor’s encouragement or of their own accord. So some of the improvement in longevity and quality of life that we may attribute to health care could actually be a result of changes in diet, exercise and the like. For another, lifestyle and environmental factors could — and almost certainly do — play a role in causing some of the conditions the health system subsequently treats. In other words, the health system may be quite good at making us healthy, but other things outside the system may have made us sick in the first place. “One big question in figuring out where to direct dollars to improve lives is how effectively we can get people to change behavior,” said Anupam Jena, a physician and an economist who does research at the Harvard Medical School. “It may be true that diet and exercise affect health a great deal, but getting people to make lifestyle changes is sometimes vastly more difficult than getting them to take a pill or undergo a procedure.” Another limitation of studies like this is that they rely, in part, on assumptions and estimates. But careful work can help us gain confidence that they’re not driving the results. For example, according to research by the Harvard economist David Cutler and colleagues, reduced smoking rates and other changes known to have big effects on mortality — like fewer deaths from auto accidents — can explain only 21 percent of the nearly eight-year increase in longevity between 1960 and 2000. 
So it’s not implausible that the health system could explain a fairly large share of the rest of that gain. Mr. Cutler’s work also compared the life-lengthening benefits of the health system with what it costs. In total, each additional year of life gained between 1960 and 2000 attributed to the health system cost nearly $20,000. Looking only at 65-year-olds, the average cost per year of life gained was about $85,000. Both figures are below the typical amounts that society is willing to spend to gain a year of life. There’s no ironclad way to pin down the willingness to pay for a year of life in good health, of course (and it’s quite a controversial subject, as you might imagine), but estimates extend to at least $150,000. It’s findings like these that have led some scholars to argue that health care is so valuable that we should be willing to spend even more on it. Since 2000, we certainly have. In 2000, obtaining an additional year of life from the health system for a 65-year-old cost nearly $160,000, more than double what it was in 1980, according to Mr. Cutler’s study. “What we really want to know is, how much does an extra dollar spent on health care buy us today?” Dr. Jena said. “Just as you can overdo it with exercise (jogging six days a week isn’t going to give you appreciably more benefit than jogging five days a week), you can do so with health care.” Studies are mixed on this point: Some suggest that spending more provides substantial additional benefit; some suggest it doesn’t. The answer may depend, in part, on which part of the health system you examine. A recent study in Health Affairs concluded that fewer adverse events from cardiovascular disease explained half of the slowdown in the rate of growth of Medicare spending from 1999 to 2012. And the health system, specifically medication, accounted for half of that reduction in cardiac events. Another Health Affairs study focused on seven prevalent chronic conditions.
The conditions examined (breast and lung cancer, cerebrovascular disease, chronic obstructive pulmonary disease, diabetes, H.I.V./AIDS and ischemic heart disease) are collectively responsible for most of the mortality and serious disease in the population. According to the study, which compared changes in cost and in length and quality of life from 1995 to 2015 for people with those conditions, the health system provides good value. So even at American prices, health care is still a bargain. But that doesn’t mean there isn’t also waste in the system. Nor does it mean that a dollar spent on health care today produces more improvements in health and well-being than a dollar spent on other things like improved diet and exercise.

That is the opinion of Theodore Marmor, professor of public policy at Yale and author of the book The Politics of Medicare. Whether you agree with him or not, it is difficult to deny the influence of Medicare and Medicaid on the health care industry. To mark the 50th anniversary of Medicare and Medicaid, signed into law by President Lyndon Johnson on July 30, 1965, we have identified four ways these programs have shaped the health care industry.

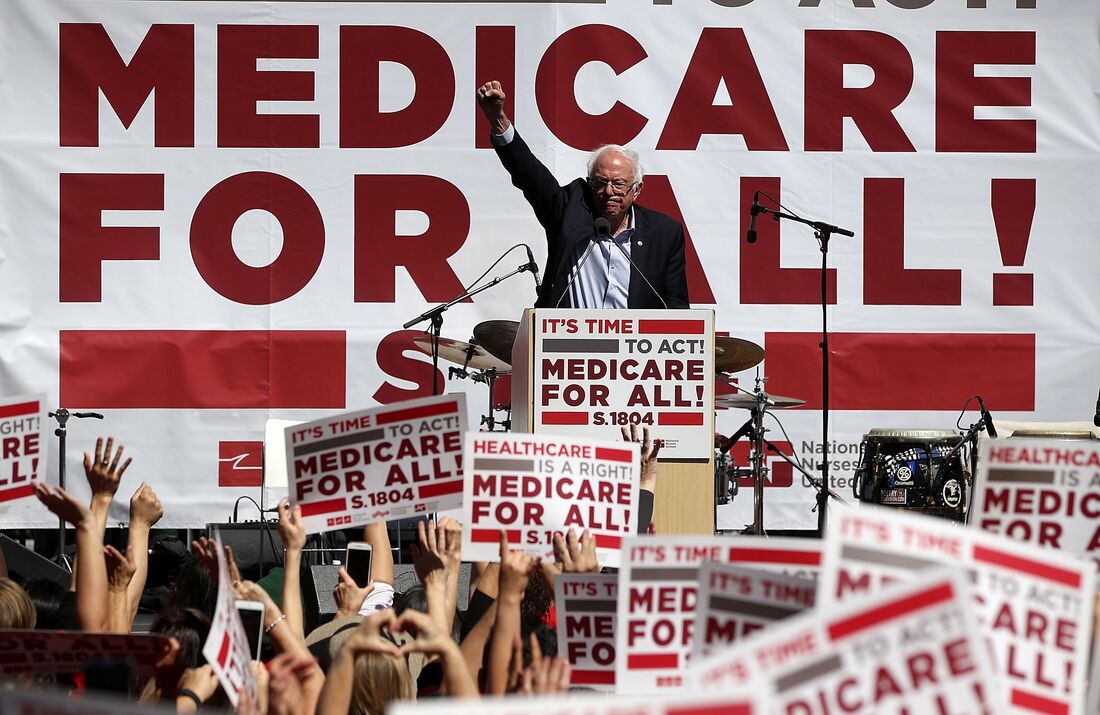

America’s history of healthcare is a bit different from that of most first-world nations. Our staunch belief in capitalism has prevented us from developing the kind of national healthcare the United Kingdom, France, and Canada have used for decades. As a result, we have our own system of sorts that has evolved drastically over the past century and is both loved and hated.

Whichever end of the spectrum you lean toward, there’s no doubt about it: the history of healthcare in America is a long and winding road. How we got to where we are in 2017 is quite a story, so let’s dive in.

The History of Healthcare: From the Late 1800s to Now

The Late 1800s: The earliest formalized records in America’s history of healthcare date to the end of the 19th century. The industrial revolution brought steel mill jobs to many U.S. cities, but the dangerous nature of the work led to more and more workplace injuries. As these manufacturing jobs became increasingly prevalent, their unions grew stronger. To shield their members from catastrophic financial losses due to injury or illness, the unions began to offer various forms of sickness protection. At the time, there was very little organized structure, and most decisions were made by trial and error.

The 1900s: With the turn of the century came a push for organized medicine, led in part by the American Medical Association (AMA), which was growing stronger and gained 62,000 physicians during the coming decade. But because the working class didn’t support the idea of compulsory healthcare, the U.S. didn’t see the kind of groundswell that leading European nations would see soon after. The 26th President of the United States, Theodore Roosevelt (1901-1909), believed health insurance was important because “no country could be strong whose people were sick and poor.” Even so, he didn’t lead the charge for stronger healthcare in America. Most of the initiative in the early 1900s came from organizations outside the government.

The 1910s: One of the organizations heavily involved in advancing healthcare was the American Association for Labor Legislation (AALL), which drafted legislation targeting the working class and low-income citizens (including children).
Under the proposed bill, qualified recipients would receive sick pay, maternity benefits, and a death benefit of $50.00 to cover funeral expenses. The cost of these benefits would be split among states, employers, and employees. The AMA initially supported the bill, but some medical societies objected, citing concerns over how doctors would be compensated. The fierce opposition caused the AMA to back down and ultimately pull its support for the AALL bill. Union leaders also feared that compulsory health insurance would weaken them, since part of their power came from being able to negotiate insurance benefits for union members. As one might expect, the private insurance industry opposed the AALL bill as well, fearing it would undermine its business. If Americans received compulsory insurance through the government, they might not see the need to purchase additional insurance policies privately (especially life insurance), which could put insurers out of business or, at the very least, cut into their profits. In the end, the AALL bill couldn’t garner enough support to move forward, and global events turned Americans' attention toward the war effort. After the start of World War I, Congress passed the War Risk Insurance Act, which covered military servicemen in the event of death or injury. The Act was later amended to extend financial support to the servicemen’s dependents. The War Risk Insurance program essentially ended with the conclusion of the war in 1918, though benefits continued to be paid to survivors and their families.

The 1920s: After World War I, the cost of healthcare became a more pressing matter as hospitals and physicians began to charge more than the average citizen could afford. In response, a group of teachers created a program through Baylor University Hospital in which they prepaid for future medical services, entitling each member to up to 21 days of hospital care.
The resulting organization was not-for-profit and covered only hospital services. It was essentially the precursor to Blue Cross.

The 1930s: When the Great Depression hit, healthcare became a more heated debate. One might expect such conditions to create the perfect climate for compulsory, universal healthcare, but in reality, they did not. Instead, unemployment and “old age” benefits took precedence. While “The Blues” (Blue Cross and Blue Shield) began to expand across the country, the 32nd President of the United States, Franklin Delano Roosevelt (1933-1945), knew healthcare would grow into a substantial problem, so he got to work on a health insurance bill that included the “old age” benefits so desperately needed at the time. However, the AMA once again fiercely opposed any plan for a national health system, causing FDR to drop the health insurance portion of the bill. The resulting Social Security Act of 1935 created a system of “old-age” benefits and allowed states to make provisions for people who were unemployed, disabled, or both.

The 1940s: As the U.S. entered World War II after the attack on Pearl Harbor, attention shifted away from the health insurance debate. Essentially all government focus was placed on the war effort, including the Stabilization Act of 1942, which was written to fight inflation by limiting wage increases. Since U.S. businesses were prohibited from offering higher salaries, they began looking for other ways to recruit new employees and to encourage existing ones to stay. Their solution was the foundation of employer-sponsored health insurance as we know it today. Employees welcomed this benefit, as they didn’t have to pay taxes on their new form of compensation and they were able to secure healthcare for themselves and their families. After the war ended, the practice continued to spread as veterans returned home and began looking for work.
While this was an improvement for many, it left out vulnerable groups: retirees, the unemployed, people unable to work due to a disability, and those whose employers did not offer health insurance. To avoid leaving these at-risk citizens behind, some government officials felt it was important to keep pushing for a national healthcare system. The Wagner-Murray-Dingell Bill, introduced in 1943, proposed universal health care funded through a payroll tax. As the history of healthcare up to that point might have predicted, the bill faced intense opposition and eventually died in committee. When FDR died in 1945, Harry Truman (1945-1953) became the 33rd President of the United States. He took up FDR’s national health insurance platform from the mid-1930s, but with some key changes. Truman’s plan included all Americans, rather than only working-class and poor citizens who had a hard time affording care, and it met with mixed reactions in Congress. Some members of Congress called the plan “socialist” and suggested that it came straight out of the Soviet Union, adding fuel to the Red Scare that was already gripping the nation. Once again, the AMA took a hard stance against the bill, claiming the Truman Administration was toeing “the Moscow party line.” The AMA even introduced its own plan, which proposed private insurance options, departing from its previous opposition to third parties in healthcare. Even after Truman was re-elected in 1948, his health insurance plan died as public support dropped off and the Korean War began. Those who could afford it began purchasing health insurance plans privately, and labor unions used employer-sponsored benefits as a bargaining chip during negotiations.

The 1950s: As the government became primarily concerned with the Korean War, the national health insurance debate was tabled once again.
While the country tried to recover from its third war in 40 years, medicine was moving forward. It could be argued that the effects of penicillin in the 1940s opened people’s eyes to the benefits of medical advancements and discoveries. In 1952, Jonas Salk’s team at the University of Pittsburgh created an effective polio vaccine, which was tested nationwide two years later and approved in 1955. During this same time frame, the first successful organ transplant was performed when Dr. Joseph Murray and Dr. David Hume took a kidney from one man and placed it in his twin brother. Of course, such leaps in medical advancement came with additional cost, a story from the history of healthcare that is still repeated today. During this decade, the price of hospital care doubled, again pointing to America’s desperate need for affordable healthcare. In the meantime, not much changed in the health insurance landscape.

The 1960s: By 1960, the government had started tracking National Health Expenditures (NHE) and calculating them as a percentage of Gross Domestic Product (GDP). At the start of the decade, NHE accounted for 5 percent of GDP. When John F. Kennedy (1961-1963) was sworn in as the 35th President of the United States, he wasted no time in proposing a healthcare plan for senior citizens. Seeing that NHE would continue to increase and knowing that retirees would be most affected, he urged Americans to get involved in the legislative process and pushed Congress to pass his bill. But in the end, it failed miserably against harsh AMA opposition and, once again, fear of socialized medicine. After Kennedy was assassinated on November 22, 1963, Vice President Lyndon B. Johnson (1963-1969) took over as the 36th President of the United States. He picked up where Kennedy left off with a health plan for senior citizens.
He proposed an extension and expansion of the Social Security Act of 1935, as well as of the Hill-Burton Program (which gave government grants to medical facilities in need of modernization, in exchange for providing a “reasonable” amount of medical services to those who could not pay). Johnson’s plan focused solely on making sure senior and disabled citizens were still able to access affordable healthcare, both through physicians and hospitals. Though Congress made hundreds of amendments to the original bill, it did not face nearly the opposition that preceding legislation had; one could speculate about the reasons for its easier path, but it would be impossible to pinpoint them with certainty. It passed the House and Senate by generous margins and went to the President’s desk. Johnson signed the Social Security Act of 1965 on July 30 of that year, with former President Harry Truman sitting at the table with him. This bill laid the groundwork for what we now know as Medicare and Medicaid.

The 1970s: By 1970, NHE accounted for 6.9 percent of GDP, due in part to “unexpectedly high” Medicare expenses. Because the U.S. had not formalized a health insurance system (it was still just people who could afford it buying insurance), policymakers had little idea how much it would cost to provide healthcare for an entire group of people, especially an older group that is more likely to have health problems. Either way, this was quite a leap over a ten-year span, and it wouldn’t be the last time we’d see such jumps. This decade would mark another push for national health insurance, this time from unexpected places. Richard Nixon (1969-1974) was elected the 37th President of the United States in 1968. As a teen, he watched two brothers die and saw his family struggle through the 1920s to care for them. To earn extra money for the household, he worked as a janitor.
When it came time to apply to colleges, he had to turn Harvard down because his scholarship didn’t include room and board. Because he entered the White House as a Republican, many were surprised when he proposed legislation that strayed from party lines in the healthcare debate. With Medicare still fresh in everyone’s minds, it wasn’t a stretch to believe additional healthcare reform would come hot on its heels, so members of Congress were already working on a plan. In 1971, Senator Edward (Ted) Kennedy proposed a single-payer plan (a modern version of a universal, or compulsory, system) that would be funded through taxes. Nixon didn’t want the government reaching so far into Americans’ lives, so he proposed his own plan, which required employers to offer health insurance to employees and even provided subsidies to those who had trouble affording the cost. Nixon believed that basing a health insurance system in the open marketplace was the best way to strengthen the existing makeshift system of private insurers. In theory, this would have allowed the majority of Americans to have some form of health insurance: people of working age (and their immediate families) would have insurance through their employers, and they would move to Medicare when they retired. Lawmakers believed the bill satisfied the AMA because doctors’ fees and decisions would not be influenced by the government. Kennedy and Nixon ended up working together on a plan, but in the end, Kennedy buckled under pressure from unions and walked away from the deal, a decision he later said was “one of the biggest mistakes of his life.” Shortly after negotiations broke down, Watergate hit, and all the support Nixon’s healthcare plan had garnered disappeared. The bill did not survive his resignation, and his successor, Gerald Ford (1974-1977), distanced himself from the scandal.
However, Nixon was able to accomplish two healthcare-related tasks. The first was an expansion of Medicare in the Social Security Amendments of 1972, and the other was the Health Maintenance Organization Act of 1973 (the HMO Act), which brought some order to a chaotic healthcare industry. But by the end of the decade, American medicine was considered to be in “crisis,” worsened by an economic recession and heavy inflation.

The 1980s: By 1980, NHE accounted for 8.9 percent of GDP, an even larger leap than in the decade prior. Under the Reagan Administration (1981-1989), regulations loosened across the board and privatization of healthcare became increasingly common. In 1986, Reagan signed the Consolidated Omnibus Budget Reconciliation Act (COBRA), which allowed former employees to remain enrolled in their previous employer’s group health plan as long as they agreed to pay the full premium (employer portion plus employee contribution). This gave health insurance access to the recently unemployed, who might otherwise have had difficulty purchasing private insurance (due to a pre-existing condition, for example).

The 1990s: By 1990, NHE accounted for 12.1 percent of GDP, the largest increase thus far in the history of healthcare. Like others before him, the 42nd President of the United States, Bill Clinton (1993-2001), saw that this rapid increase in healthcare expenses would be damaging to the average American and attempted to take action. Shortly after being sworn in, Clinton proposed the Health Security Act of 1993. It echoed many ideas from FDR’s and Nixon’s plans: universal coverage that still respected the private insurance system that had formed on its own in the absence of legislation. Individuals could purchase insurance through “state-based cooperatives,” companies could not deny anyone coverage based on a pre-existing condition, and employers would be required to offer health insurance to full-time employees.
Multiple issues stood in the way of the Clinton plan, including foreign affairs, the complexity of the bill, an increasing national deficit, and opposition from big business. After a period of debate toward the end of 1993, Congress left for winter recess with no conclusions or decisions, leading to the bill’s quiet death. In 1996, Clinton signed the Health Insurance Portability and Accountability Act (HIPAA), which established privacy standards for individuals’ health information. It also guaranteed that a person’s medical records would be available upon request and placed restrictions on how pre-existing conditions were treated in group health plans. The final healthcare contribution from the Clinton Administration was part of the Balanced Budget Act of 1997. Called the Children’s Health Insurance Program (CHIP), it expanded Medicaid assistance to “uninsured children up to age 19 in families with incomes too high to qualify them for Medicaid.” CHIP is run by each individual state and is still in use today. In the meantime, employers were trying to find ways to cut back on healthcare costs. In some cases, this meant offering HMOs, which are designed to cost both the insurer and the enrollee less money. Typically this involves cost-saving measures, such as narrow networks and requiring enrollees to see a primary care physician (PCP) before a specialist. Generally speaking, insurance companies were trying to gain more control over how people received healthcare. The strategy worked overall: the 1990s saw slower healthcare cost growth than previous decades.

The 2000s: By the year 2000, NHE accounted for 13.3 percent of GDP, just a 1.2 percentage-point increase over the previous decade. When George W. Bush (2001-2009) was elected the 43rd President of the United States, he wanted to update Medicare to include prescription drug coverage.
This idea eventually became the Medicare Prescription Drug, Improvement, and Modernization Act of 2003, which created the drug benefit known as Medicare Part D. Enrollment was (and still is) voluntary, although millions of Americans use the program. National health reform then stalled, as the debate was tabled while the U.S. focused on the increased threat of terrorism and the second Iraq War. It wasn’t until early campaigning for the 2008 election began in 2006 and 2007 that insurance worked its way back into the national discussion. When Barack Obama (2009-2017) was elected the 44th President of the United States in 2008, he wasted no time getting to work on health care reform. He worked closely with Senator Ted Kennedy to create a new healthcare law that mirrored the one Kennedy and Nixon worked on in the 1970s. Like Nixon’s bill, it required applicable large employers to provide health insurance; it also required all Americans to carry health insurance, even if their employer did not offer it. The bill would establish an open Marketplace, on which insurance companies could not deny coverage based on pre-existing conditions. Americans earning less than 400 percent of the federal poverty level would qualify for subsidies to help cover the cost. It wasn’t universal or single-payer coverage; instead, it used the existing private insurance industry model to extend coverage to millions of Americans. The bill circulated in the House and the Senate for months, going through multiple revisions, but ultimately passed and moved to the President’s desk.

2010 to Now: While the country focused on the second Iraq War, the cost of healthcare took another leap. By 2010, NHE accounted for 17.4 percent of GDP. This period would bring a new but divisive chapter in the history of healthcare in America. On March 23, 2010, President Obama signed the Patient Protection and Affordable Care Act (PPACA), commonly called the Affordable Care Act (ACA), into law.
Because the law was complex and the first of its kind, the government phased in its provisions over several years. In theory, this should have helped ease insurance companies (and individuals) through the transition, but in practice, things weren’t so smooth. The first open enrollment season for the Marketplace started in October 2013, and it was rocky, to say the least. Nevertheless, 8 million people signed up for insurance through the ACA Marketplace during that first season. Enrollment rose to 11.7 million in 2015, and it’s estimated that the ACA Marketplace has covered an average of 11.4 million people annually ever since. It’s no secret that the ACA met heavy opposition for a variety of reasons (the individual mandate and the employer mandate being two of the most hotly contested). Some provisions were even challenged before the Supreme Court on constitutional grounds. In addition, critics pointed to the problems with healthcare.gov as a sign that this grand “socialist” plan was destined to fail. Regardless of the controversy, it could be argued that the most helpful part of the ACA was its pre-existing condition clause. Over the course of the 20th century, insurance companies began denying coverage to individuals with pre-existing conditions, such as asthma, heart attacks, strokes, and AIDS. Exactly when insurers began citing pre-existing conditions is debatable, but it very possibly happened as for-profit insurance companies popped up across the landscape. Back in the 1920s, not-for-profit Blue Cross charged the same amount regardless of age, sex, or pre-existing condition, but eventually it changed its status to compete with the newcomers. And as the cost of healthcare increased, so did the number of people being denied coverage.
Prior to the passage of the ACA, it’s estimated that one in seven Americans was denied health insurance because of a pre-existing condition, the list of which was extensive and often vague, thanks to variations between insurance companies and language like “including, but not limited to the following.” In addition, the ACA allowed for immediate coverage of maternal and prenatal care, which had previously been far more restricted in private insurance policies. Usually, women had to pay an additional fee for maternity coverage for at least 12 months before prenatal care would be covered; otherwise, the pregnancy was viewed as a pre-existing condition, and services involving prenatal care (bloodwork, ultrasounds, check-ups, etc.) were not included in the policy.

The Future of Healthcare: History Shall Repeat Itself

Since Donald Trump was sworn in as the 45th President of the United States on January 20, 2017, many have been questioning what will happen to our healthcare system, and specifically to the ACA, since Trump ran on a platform of “repealing and replacing” the law. As open enrollment for 2017 drew to a close, it became apparent to lawmakers that either repealing or replacing the ACA would be no easy task. If they were to repeal the law, what would happen to the 11 million Americans currently insured through the Marketplace? If they come up with a replacement plan, what would it look like? What changes would be made? Would there be a Marketplace? Would insurance companies be able to deny coverage based on pre-existing conditions again? There are plenty of questions that need to be answered, and it doesn’t seem like a decision will be reached anytime soon, but one thing is for sure: changes will be made. What those changes will be depends on Congress, Trump, the new Secretary of Health and Human Services, Tom Price, and you, the voter.
The history of healthcare in America will continue to evolve, and it will be interesting to see where this administration takes us and the effects its plan will have on Americans. Regardless, we’ll have to keep an eye on the national health expenditure numbers. According to the latest data available, NHE was 17.8 percent of GDP in 2015, signaling slower growth than in the previous decade, but only time will tell the full story.

Reputable credit counseling organizations advise you on managing your money and debts, help you develop a budget, and usually offer free educational materials and workshops. Their counselors are certified and trained in the areas of consumer credit, money and debt management, and budgeting. A reputable credit counseling agency should send you free information about the services it provides without requiring you to provide any details about your situation. If a firm doesn’t do that, consider it a red flag and go elsewhere for help. When you’re choosing a credit counseling agency, check for the following:

Beware of companies that guarantee they can remove unsecured debt, that promise unsecured debts can be paid off for pennies on the dollar, or that demand payment of a percentage of your savings. Reliable credit counselors help people manage their money better and, if appropriate, can help get better interest rates on loans and set up a realistic repayment plan that is acceptable to creditors. They cannot “fix” bad credit or pay off debts for less than is owed. If it looks too good to be true, it probably is.

In these difficult economic times, many of today’s college students are turning to credit cards to finance their college education, using them for everything from everyday necessities to books and tuition. Unfortunately, this can result in an excessive amount of debt. Young people are frequently unaware that their bill-paying habits will affect their credit history. Many graduates do not think they need to worry about their credit score until they apply for a mortgage to buy a house. So it can come as a shock when they find out that potential landlords, employers, and even utility companies routinely check credit as part of their application process. Learning how to manage student loans, credit cards, and other debt is essential for college students. Establishing financial skills early on and working to build a good credit standing will affect their lives both now and in the future. For many people, a credit history begins with their first credit card. And good credit can help savvy college graduates save money in the following situations:

Insurance scores vs. credit scores

Insurance scores are different from credit scores, and it is important to understand the distinction. Your credit score is a number that represents your overall creditworthiness, predicting the likelihood of delinquency or non-payment of credit obligations. It reflects your credit history, from your very first credit card to the bills that you pay. Whether you are buying a house, applying for a credit card, or looking to purchase a car, your credit score will factor into these decisions. Your insurance score, on the other hand, is based in part on your credit score but also includes other factors pertaining to your insurance history. For example, with auto insurance, information about age, gender, income, the number of car insurance claims you have made, Department of Motor Vehicles points, your timeliness with payments, etc. all factor into the equation that determines your score. Insurers use this score to determine whether you are a good risk to insure.

Developing a financial plan

To develop a good credit rating, parents and students need to work together on a financial plan for college from the beginning. Specific educational expenses, including tuition, room and board, and books and fees, can be viewed as “good debt” and can be covered through student loans, grants, and the like. Day-to-day college expenses, including personal needs, transportation costs, telephone, and other incidentals, are the types of expenses that students should try not to charge on credit cards. In most cases, college is the first opportunity for young people to make independent financial judgments. Carrying high, unpaid balances is one of the quickest ways to incur too much debt and fall behind in payments. If college students plan to use a credit card regularly, they should set limits and know ahead of time where the money will come from to pay the bill at the end of the month.
When deciding on a credit card, students should read the fine print and shop around for the best terms. Look for cards that:

How to improve your credit score if it has been damaged
